The hidden bias in readability tools

Common AI readability tools are built on long-flawed methods for making text accessible

Around the world, writers are told to “make it plain.”

Editors prize short sentences, simple words, and content written at roughly a sixth- to eighth-grade reading level. The idea is noble: simplicity makes information accessible. Whether it’s a quick news article, an opinion piece, or a complex health story, clear writing is meant to ensure that everyone can understand.

But in the age of algorithms, clarity is no longer judged by humans alone. Tools like Microsoft Word’s Flesch-Kincaid scale, Grammarly’s readability meter, and similar digital systems now assign numerical scores to text, deciding whether writing is “easy” or “difficult.” In practice, they have quietly become gatekeepers of what counts as accessible writing.

These tools are far from neutral. The algorithms carry hidden biases that shape how ideas are expressed, whose voices are amplified, and which kinds of language are valued.

Readability tools do not simply measure clarity; they actively shape it.

The original sin of measuring readability

The origins of readability formulas trace back to mid-20th-century America.

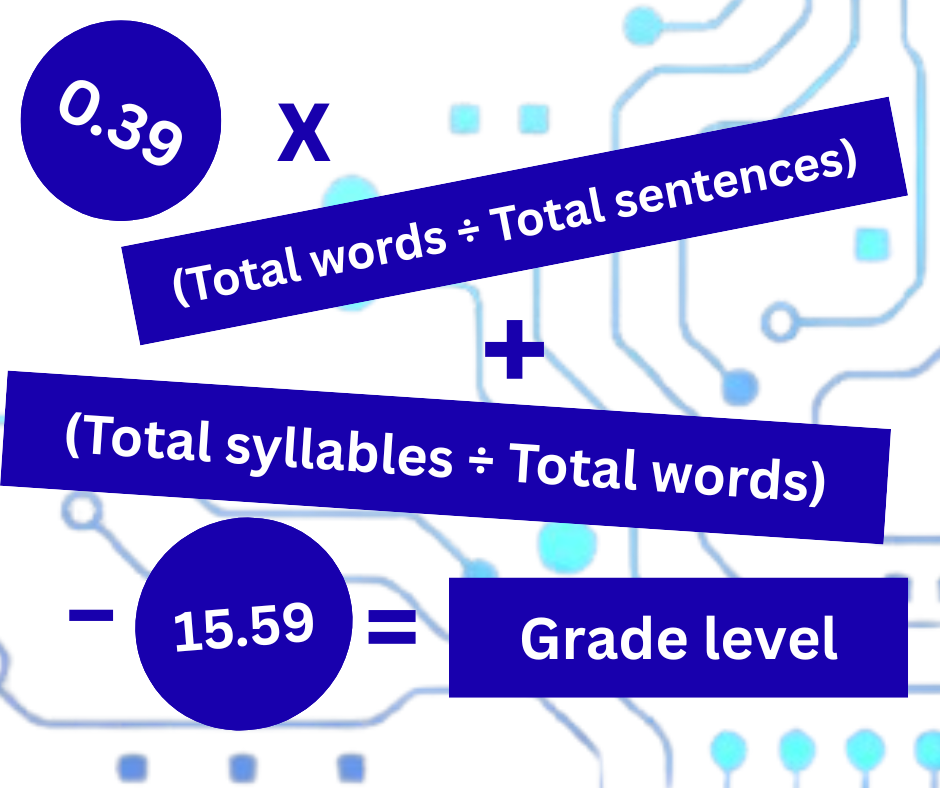

In 1948, Rudolf Flesch published “A New Readability Yardstick,” measuring sentence length and syllable count to evaluate training manuals. Nearly three decades later, J. Peter Kincaid and colleagues adapted the general approach for the U.S. Navy, producing the now-ubiquitous Flesch-Kincaid Grade Level formula. These methods were built for technical documents, not essays, fiction, or health guides, and definitely not news or other types of journalism. They were calibrated on monolingual, Western English texts. From the start, they privileged a narrow stylistic norm.
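To show how little such a formula actually looks at, here is a minimal Python sketch of the Flesch-Kincaid Grade Level calculation using its standard published coefficients (0.39, 11.8, and 15.59). The function names are hypothetical, and the syllable counter is a deliberately crude vowel-group heuristic chosen for illustration; Word, Grammarly, and other tools use their own rules, so their scores will not match this one exactly.

```python
import re


def count_syllables(word: str) -> int:
    """Approximate syllables by counting runs of vowels.

    Assumption: a crude heuristic for illustration only. Real tools use
    dictionaries or more elaborate rules, so counts will differ.
    """
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    # Treat a final "e" as silent ("make"), except in endings like "table".
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def flesch_kincaid_grade(text: str) -> float:
    """Flesch-Kincaid Grade Level with the standard published coefficients."""
    # Naive sentence split on ., ! and ?; abbreviations like "U.S." will miscount.
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text needs at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    words_per_sentence = len(words) / len(sentences)
    syllables_per_word = syllables / len(words)
    return 0.39 * words_per_sentence + 11.8 * syllables_per_word - 15.59


# The formula sees only counts, so sentence order never changes the score.
original = "The rain stopped. We went outside. The grass was wet."
shuffled = "The grass was wet. We went outside. The rain stopped."
print(flesch_kincaid_grade(original) == flesch_kincaid_grade(shuffled))  # True
```

Because the score depends only on words per sentence and syllables per word, shuffling the sentences, even when that breaks the cause-and-effect link between them, produces exactly the same grade. The formula has no way to register whether ideas connect.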

Writers who use figurative language, complex syntax, or culturally specific expressions are often penalized. A Caribbean idiom or an Indigenous storytelling cadence can register as “hard to read.” The algorithm flags difference as difficulty. As a 2024 study in PNAS Nexus notes, even advanced language models carry cultural bias, underestimating the readability of non-Western linguistic structures.

Linguists such as Scott Crossley and Danielle McNamara have shown that surface metrics like word length and sentence count miss what actually drives comprehension: cohesion, logic, and context. A perfectly “simple” text can still confuse readers if ideas don’t connect. Studies of bilingual readers reveal that formulas like Flesch-Kincaid produce wildly inconsistent results, since what feels “complex” in English may be perfectly natural elsewhere.

Context and relevance make meaning accessible

The Plain Language Association International argues that true understanding depends far more on cultural relevance and structure than on sentence length. As Karen Schriver, a leading document-design scholar, puts it, a text can score well on readability but still fail to communicate.

Across institutions, experts increasingly caution that clarity should support meaning, not erase it. Nevertheless, readability scores have become shorthand for accessibility. Editorial software and content platforms often privilege texts that hit the right “grade level,” subtly pressuring writers to flatten tone, cultural texture, or intellectual depth. This effect lands hardest on marginalized voices. Non-Western writers, technical experts, and disabled authors who use adaptive syntax can all be sidelined by systems that equate “easy” with “normal.”


In disability studies, scholars such as M. Remi Yergeau argue that neurodivergent writing, common among people with autism or ADHD, often resists linear, simplified patterns. Loops, tangents, or associative rhythms may reflect authentic cognition rather than confusion. A paragraph that feels “complex” to an algorithm might, in fact, resonate deeply with readers who think similarly.

Guidelines on avoiding ableist language, like those outlined by Kristen Bottema-Beutel and colleagues, warn that readability formulas can pathologize neurodivergent expression. Reviews of neuroqueer rhetoric highlight demi-rhetorics: styles that break from standard logic to honor different cognitive realities. Seen through this lens, simplicity as a universal standard becomes an ableist fiction.

Sociologist Boaventura de Sousa Santos calls for cognitive justice, the right to produce and encounter knowledge in diverse cognitive and cultural forms. Under this framework, readability tools should be guides, not judges. They can help writers communicate, but they should never dictate the only acceptable form of clarity.

Technology itself isn’t the villain.

Artificial intelligence already shapes tone and structure in modern writing software. But if AI models continue to train mostly on Western, monolingual data, they will keep reinforcing a single dominant style. Scholars in computational linguistics, like Dirk Hovy, argue that inclusivity requires retraining algorithms on multilingual and neurodiverse samples, measuring not just conformity but engagement and comprehension.

True clarity is not about short sentences or plain words; it is about reachability — meeting readers where they are, acknowledging culture, experience, and cognition. Readability tools can still be valuable, but only if their limits are transparent. Used thoughtfully, they help writers refine communication. Used uncritically, they risk flattening language, culture, and identity into a single measurable style.

The path forward demands not just better software but a broader vision. We must recognize that true accessibility is not uniform simplicity, but the freedom to express and to understand ideas in many forms.

Kehinde Adepetun is a freelance journalist and communications professional. She writes about inclusive storytelling, tech, and culture. You can connect with her on LinkedIn and follow her work on Substack.

This is an opinion. While this piece contains factual information, it is the author’s point of view.


Get more from The Word — sent right to your inbox

Get behind the articles with thoughts from the writers and editors with our newsletter, The Word: Etymology. You’ll receive it monthly when we publish the new issue of The Word.

The Word is free, but great journalism isn’t

Love what you’re reading? We keep it free to read and never use a paywall. How do we do that? With the help of donors like you. We call our donors Word Patrons, and you can join for as little as $5 a month.